Validate data: Keep data that have values in the expected ranges and reject any that do not.Take data from a range of sources, such as APIs, non/relational databases, XML, JSON, CSV files, and convert it into a single format for standardized processing. Extract data from different sources: the basis for the success of subsequent ETL steps is to extract data correctly.

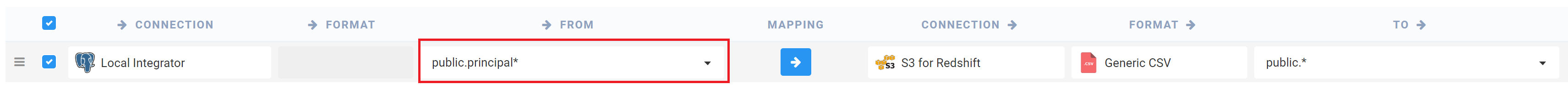

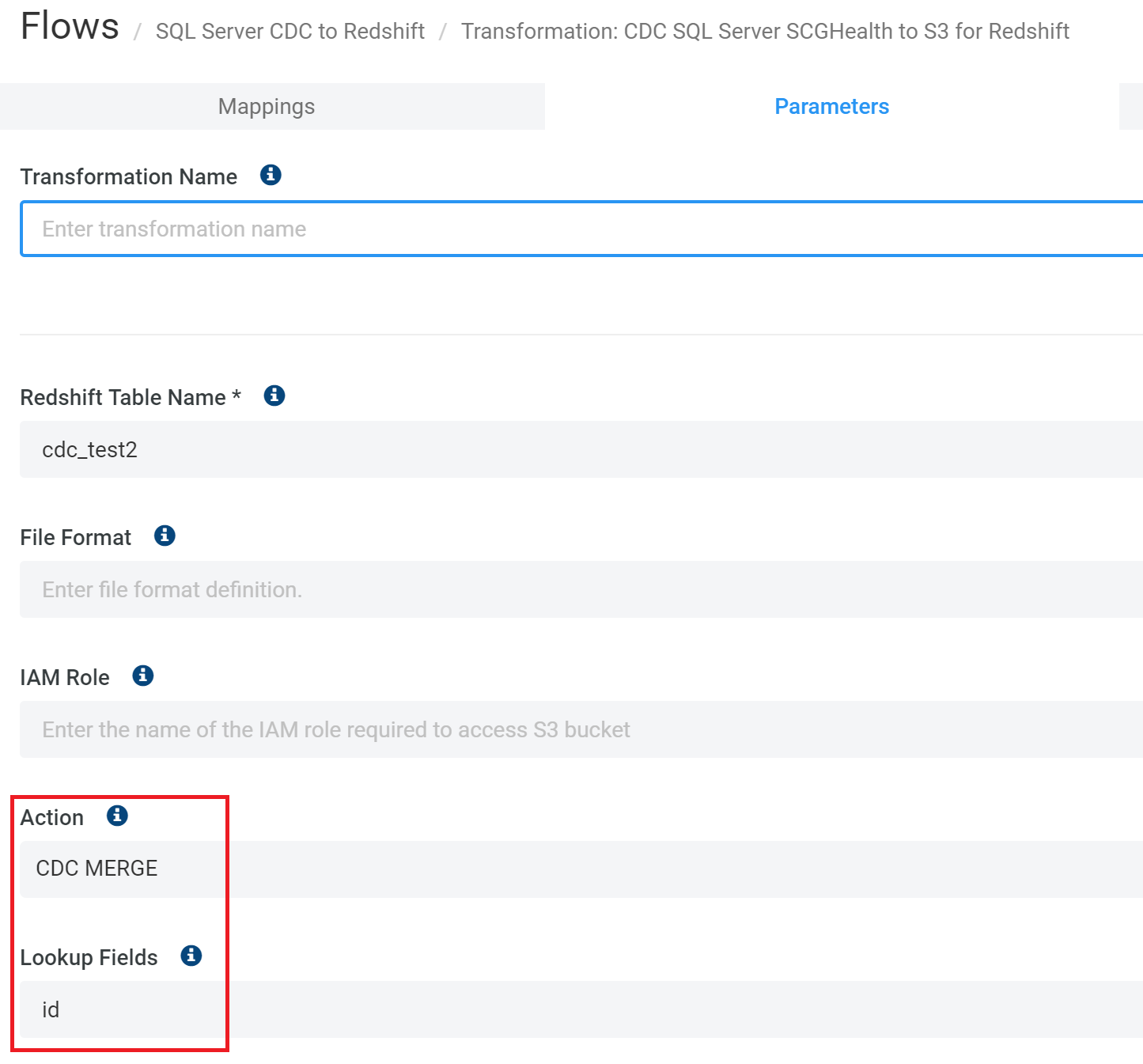

For example, in a country data field, specify the list of country codes allowed. Create reference data: create a dataset that defines the set of permissible values your data may contain.To build an ETL pipeline with batch processing, you need to: It’s challenging to build an enterprise ETL workflow from scratch, so you typically rely on ETL tools such as Stitch or Blendo, which simplify and automate much of the process. In a traditional ETL pipeline, you process data in batches from source databases to a data warehouse. Building an ETL Pipeline with Batch Processing Let’s start by looking at how to do this the traditional way: batch processing. This process is complicated and time-consuming. Then you must carefully plan and test to ensure you transform the data correctly. When you build an ETL infrastructure, you must first integrate data from a variety of sources. ETL typically summarizes data to reduce its size and improve performance for specific types of analysis. What is ETL (Extract Transform Load)?ĮTL (Extract, Transform, Load) is an automated process which takes raw data, extracts the information required for analysis, transforms it into a format that can serve business needs, and loads it to a data warehouse. For the former, we’ll use Kafka, and for the latter, we’ll use Panoply’s data management platform.īut first, let’s give you a benchmark to work with: the conventional and cumbersome Extract Transform Load process. The other is automated data management that bypasses traditional ETL and uses the Extract, Load, Transform (ELT) paradigm. One such method is stream processing that lets you deal with real-time data on the fly. Well, wish no longer! In this article, we’ll show you how to implement two of the most cutting-edge data management techniques that provide huge time, money, and efficiency gains over the traditional Extract, Transform, Load model. 3 Ways to Build ETL Process Pipelines with ExamplesĪre you stuck in the past? 
Are you still using the slow and old-fashioned Extract, Transform, Load (ETL) paradigm to process data? Do you wish there were more straightforward and faster methods out there?
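To make the batch steps described in this article more concrete (reference data, extraction into a single format, and validation), here is a minimal Python sketch. It is an illustration under stated assumptions, not how Stitch, Blendo, or any other ETL tool works internally: the country codes, field names (`name`, `country`, `full_name`, `country_code`), sample records, and helper functions are all hypothetical, and the two in-memory strings stand in for real CSV and JSON sources.

```python
import csv
import io
import json

# Reference data (hypothetical): the country codes we are willing to accept.
ALLOWED_COUNTRY_CODES = {"US", "DE", "JP"}


def extract_csv(text):
    """Parse CSV text into records that share one common shape."""
    return [
        {"name": row["name"], "country": row["country"].strip().upper()}
        for row in csv.DictReader(io.StringIO(text))
    ]


def extract_json(text):
    """Parse JSON text (with different field names) into the same shape."""
    return [
        {"name": item["full_name"], "country": item["country_code"].strip().upper()}
        for item in json.loads(text)
    ]


def validate(records, allowed_codes):
    """Split records into those whose country is in the reference data and the rest."""
    accepted, rejected = [], []
    for record in records:
        if record["country"] in allowed_codes:
            accepted.append(record)
        else:
            rejected.append(record)
    return accepted, rejected


# Stand-ins for two real sources (assumed sample data).
CSV_SOURCE = "name,country\nAda,us\nLin,XX\n"
JSON_SOURCE = '[{"full_name": "Kenji", "country_code": "JP"}]'

records = extract_csv(CSV_SOURCE) + extract_json(JSON_SOURCE)
accepted, rejected = validate(records, ALLOWED_COUNTRY_CODES)

print("accepted:", accepted)
print("rejected:", rejected)
```

Keeping the rejected records in their own list, rather than silently dropping them, makes it easier to inspect why a batch shrank. In a real pipeline you would likely log or store them for review.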
